How to Make AI Video with Your Own Character

Three approaches, real comparisons, and a step-by-step walkthrough. By the end of this guide you'll know exactly which tool to pick and why.

Direct answer

To make an AI video featuring your own character, you have three options: image-to-video in Runway, Pika, or Sora; character LoRA fine-tuning in Stable Diffusion with ComfyUI; or a creator-focused tool with built-in consistency such as NuuMee. For most creators, option three is the most practical path: it preserves character identity across scenes without requiring technical setup.

TL;DR

- Image-to-video (Runway, Pika, Sora): low cost and quick to start, but the character drifts after the first scene.

- Character LoRA fine-tuning: best technical quality, but requires GPU, ComfyUI, and 50+ hours of learning.

- Creator tools (NuuMee): consistent quality with zero technical setup. Best for most creators.

- Cost: option 1 ranges $10-50/mo, option 2 is free + hardware, option 3 starts at $29/mo (NuuMee).

- Time to first video: option 1 ~10 min, option 2 ~50 hours, option 3 ~3 minutes.

Who this guide is for

You should read this if you're:

- A TikTok/Instagram/YouTube creator who wants your face (or a custom character) in AI-generated viral content

- A marketing agency producing UGC-style ads with consistent brand characters across campaigns

- A coach or course creator who needs to appear on camera without filming every week

If you're a VFX professional with technical chops, this guide will help you evaluate trade-offs — but you may end up preferring option 2 (LoRA fine-tuning) for maximum control.

The 3 ways to make AI video with your character

Image-to-video models

Runway Gen-4, Pika 2.0, Sora 2

Upload one reference image, write a prompt describing the scene, get a 5-10 second video. This is the lowest-friction approach and the one most creators try first.

Pros:

- Fast setup (about 10 min)

- No learning required

- Output quality is decent for one-off scenes

Cons:

- Character drifts after the first scene

- Premium pricing ($15-30/mo)

- Sora requires ChatGPT Plus + waitlist

Best for: one-off experiments, single-scene videos, creators who don't need multi-shot consistency.

Character LoRA fine-tuning

Stable Diffusion + ComfyUI workflows

Train a custom LoRA (Low-Rank Adaptation) model on 20-50 photos of your character. The trained model can then generate consistent character images, which feed into a video model. This is the technical creator's path.

Pros:

- Highest quality consistency

- Full control over style + identity

- Free if you have a GPU

Cons:

- 50+ hours of ComfyUI learning

- Requires NVIDIA GPU (8GB+ VRAM)

- Hours of training per character

Best for: technical creators, VFX artists, people who already use Stable Diffusion daily.
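If you're wondering what "Low-Rank Adaptation" means in practice, here is a minimal sketch of the general idea, not of any Stable Diffusion or ComfyUI training code. A LoRA leaves the original weight matrix W untouched and learns two small matrices, B and A, whose product is added on top. The matrix sizes, values, and function names below are illustrative assumptions chosen to keep the arithmetic readable.

```python
# Illustrative sketch of the LoRA idea: W_adapted = W + scale * (B @ A).
# All names and numbers here are hypothetical; real training tools handle this for you.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def apply_lora(W, B, A, scale=1.0):
    """Return the adapted weights W + scale * (B @ A) without modifying W."""
    delta = matmul(B, A)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(W, delta)
    ]


def lora_param_count(d_out, d_in, rank):
    """Trainable values in a rank-`rank` update: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


# Tiny 3x3 example with a rank-1 update.
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # frozen base weights
B = [[1.0], [0.0], [2.0]]                                # 3 x 1, trainable
A = [[0.5, 0.0, 1.0]]                                    # 1 x 3, trainable
W_adapted = apply_lora(W, B, A)

# For a 768 x 768 layer, a rank-8 LoRA trains far fewer values than the full matrix.
full_params = 768 * 768
lora_params = lora_param_count(768, 768, 8)
```

The small parameter count is why a LoRA can be trained on a few dozen photos and a consumer GPU, while the base model stays shared across every character you train.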

Creator tools with built-in consistency (recommended)

NuuMee

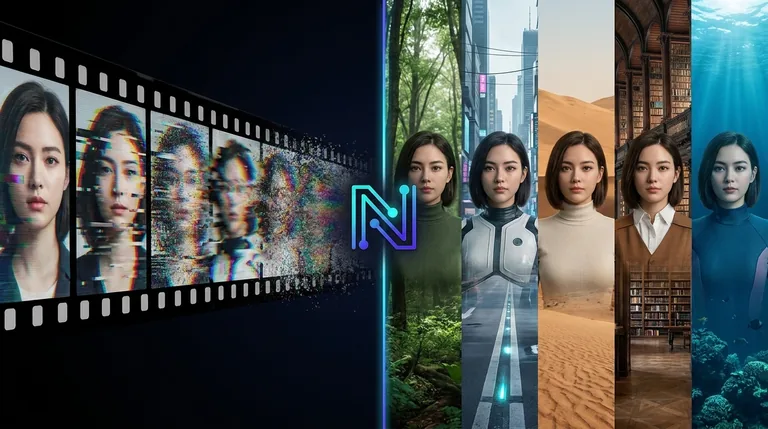

Tools built specifically for character-consistent generation. NuuMee aggregates the best AI video models (VEO 3.1, Kling, Hailuo) behind one interface designed around character preservation. Upload one reference photo, generate as many videos as you want — same character every time.

Pros:

- Consistency by default, every time

- No technical setup at all

- Access to multiple models in one place

- Predictable cost ($29/mo)

Cons:

- Less granular control than LoRA

- Monthly subscription (not pay-as-you-go)

Best for: solo content creators, agencies, coaches — anyone who wants the output without the learning curve.

Step-by-step: making your first character video in NuuMee

This is the recommended path for most creators. End-to-end: 5 minutes from signup to downloaded video.

1. Upload your character

One clear photo of your face (or any reference image). Front-facing works best. Higher resolution = better consistency. NuuMee accepts JPG, PNG, or WebP up to 10MB.

2. Describe your scene

Plain English. "Walking through Tokyo at night holding coffee" or "Sitting at a desk explaining a product." No prompt-engineering language required — NuuMee handles the model-specific prompting.

3. Choose scene parameters

Length (5-30 seconds), aspect ratio (9:16 for Reels/TikTok, 16:9 for YouTube), and style preset (cinematic, casual, animated). Sensible defaults apply if you skip this step.

4. Generate

NuuMee routes your request to the best model for the task and queues generation. Typical wait: 1-3 minutes. Studio tier gets priority queue access.

5. Iterate if needed

Don't love the first take? Regenerate with the same character reference. Each variant uses different model seeds but preserves identity. Most creators land on a usable take within 2-3 generations.

6. Download and edit

MP4 export (H.264, no watermark on paid tiers). Drop into CapCut, Premiere, DaVinci, or any video editor for cuts, captions, and music. Or post directly to TikTok/Reels.
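If you prepare many videos at once, it can help to check your inputs before you upload anything. The sketch below is a local pre-flight check only. It does not call NuuMee, and the class name, field names, and default values are hypothetical. The limits come from the steps above (JPG, PNG, or WebP up to 10MB; 5-30 seconds; the two aspect ratios and three style presets named in this guide). It also assumes 1MB means 1024 x 1024 bytes and that only the options listed here are accepted.

```python
from dataclasses import dataclass
from pathlib import Path

# Limits taken from the walkthrough; treating them as exhaustive is an assumption.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png", ".webp"}
MAX_UPLOAD_BYTES = 10 * 1024 * 1024  # "up to 10MB", assuming binary megabytes
ALLOWED_ASPECT_RATIOS = {"9:16", "16:9"}
ALLOWED_STYLES = {"cinematic", "casual", "animated"}


@dataclass
class SceneRequest:
    """Hypothetical container for one generation; not an official NuuMee type."""
    image_name: str
    image_size_bytes: int
    prompt: str
    length_seconds: int = 5       # illustrative default
    aspect_ratio: str = "9:16"    # illustrative default
    style: str = "cinematic"      # illustrative default


def validate(request):
    """Return a list of human-readable problems; an empty list means it looks fine."""
    problems = []
    if Path(request.image_name).suffix.lower() not in ALLOWED_EXTENSIONS:
        problems.append("image must be JPG, PNG, or WebP")
    if request.image_size_bytes > MAX_UPLOAD_BYTES:
        problems.append("image must be 10MB or smaller")
    if not request.prompt.strip():
        problems.append("scene description is empty")
    if not 5 <= request.length_seconds <= 30:
        problems.append("length must be between 5 and 30 seconds")
    if request.aspect_ratio not in ALLOWED_ASPECT_RATIOS:
        problems.append("aspect ratio must be 9:16 or 16:9")
    if request.style not in ALLOWED_STYLES:
        problems.append("style must be cinematic, casual, or animated")
    return problems


ok_request = SceneRequest("me.png", 2_000_000, "Walking through Tokyo at night holding coffee")
bad_request = SceneRequest("me.gif", 11 * 1024 * 1024, " ", length_seconds=45)
ok_problems = validate(ok_request)
bad_problems = validate(bad_request)
```

Returning a list of problems instead of raising on the first one lets you fix everything in a single pass before spending a generation.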

NuuMee vs Runway vs Sora vs Pika

Pricing and feature comparison for character-consistent video generation. Updated May 2026.

| Feature | NuuMee | Runway | Sora 2 | Pika 2.0 |
|---|---|---|---|---|
| Character consistency | Built-in, primary feature | Limited (motion brush helps) | Limited | Weak |
| Learning curve | Minutes | Weeks | Hours | Minutes |
| Entry price | $0 free / $29/mo paid | $15/mo | $20/mo (via ChatGPT Plus) | $10/mo |
| API access | Enterprise | Yes | No | Limited |
| Models accessible | VEO 3.1, Kling, Hailuo, more | Gen-4 only | Sora 2 only | Pika 2.0 only |
| Max video length | 30s + scene chaining | 10s per generation | 20s | 10s |
| Commercial use | All paid tiers | Pro plan | ChatGPT Plus | Pro plan |
| Waitlist required | No | No | Yes | No |
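To see what the "Max video length" row means for a real project, here is a small arithmetic sketch. It estimates how many separate generations you would need to cover a target runtime, assuming every clip runs at the maximum length from the table and that you stitch the clips together in an editor or with scene chaining. The function name is hypothetical, and the estimate ignores retakes.

```python
import math

# Maximum seconds per generation, copied from the comparison table.
MAX_CLIP_SECONDS = {
    "NuuMee": 30,
    "Runway": 10,
    "Sora 2": 20,
    "Pika 2.0": 10,
}


def clips_needed(target_seconds, tool):
    """Minimum number of generations to cover target_seconds at the tool's max clip length."""
    return math.ceil(target_seconds / MAX_CLIP_SECONDS[tool])


# Example: a 60-second video.
plan = {tool: clips_needed(60, tool) for tool in MAX_CLIP_SECONDS}
```

Every extra clip is another chance for the character to drift, so fewer, longer generations generally mean less cleanup.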

When to use each approach

Character consistency across the multiple shots you'll post per week.

NuuMee for speed + consistency, Runway if your client needs cinematic VFX-level shots and budget allows.

The only tool built around character preservation as the core feature.

Cheapest way to try the medium with no commitment.

Most granular controls — worth the learning curve for one big project.

You have the skills; go for maximum quality control.

Frequently asked questions

Will my character look exactly the same in every video?

Can I use multiple characters in one video?

What if I don't like the first result?

How long does generation take?

Can I use AI-generated characters instead of my own face?

Are there content restrictions?

What is the difference between "character consistency" and "identity locking"?

Can I make videos longer than 30 seconds?

Try character video generation free

25 free credits. No credit card. See your character in an AI-generated scene in under 5 minutes.